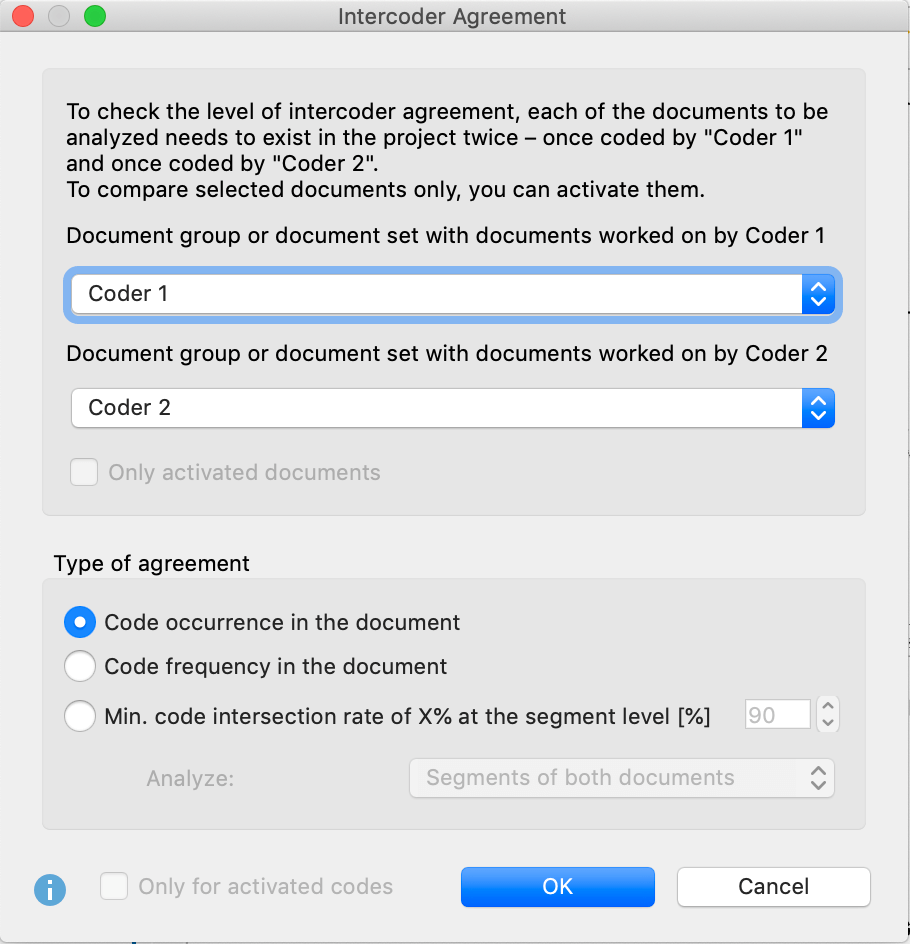

Finally, I'd like to say a few words about intercoder reliability. In public health and other related disciplines, quantitative approaches tend to be the dominant paradigm. As a result, some researchers argue that it's important to report statistics that quantify the level of agreement between coders as an indicator of rigor in analysis. More broadly in qualitative research, this is quite a polemic issue, with some researchers arguing that intercoder reliability is essential and others suggesting that it's ridiculous. Janice Morse, for instance, stated in a 1994 article, "No one takes a second reader to the library to check that indeed he or she is interpreting the original sources correctly, so why does anyone need a reliability checker for his or her data?"

I argued in the last video that assessing agreement is a key process for codebook development. It's actually quite important for making sure that individual analysts are on the same page in their understanding of what codes are meant to capture and why. In general, though, I'm quite cautious about trying to quantify levels of agreement, particularly as a measure of rigor in analysis. Yes, it's extremely important that codes are applied consistently in qualitative analysis projects, but accurate code application indicates nothing about how you then read and interpret the coded data after the coding process.

Kappa is the most commonly reported statistic measuring agreement. It's important to know what kappa statistics measure, however. The calculation requires data for a two-by-two table, where you count the number of times that both coders applied the code, the number of times neither coder applied the code, the number of times the first coder applied the code but the second one didn't, and vice versa. This is actually quite difficult to do if you don't pre-segment your data before coding it. If you pre-segment the data, you have one-to-one comparisons between coders. If you don't, you won't easily be able to say how many times coders agree, and it's especially difficult to count how many times neither coder applied the code. In this image, how many times did coders 1 and 2 agree that the code applied here: once or twice? Or did they only agree about half the time in their coding of paragraph 2? It's even more difficult to say how many times they agreed that the code didn't apply. If you don't pre-segment the text, you'll have to use some other unit of analysis, like lines or characters, to measure the "no-no" agreement, that is, the cases where neither coder applied the code.

There are several different kappa statistics, but the most commonly used one is Cohen's kappa. It estimates chance agreement based on the observed distribution in the sample. This makes sense given its origins as a method of comparing medical students' ability to diagnose conditions vis-a-vis expert diagnoses. There's an assumption that both assessments would be based in part on knowledge of the prevalence of a particular condition within the population. If they're reviewing lung x-rays, it makes sense that a student and an expert radiologist would both be less likely to diagnose an extremely rare condition than a more common one.

This assumption, however, makes little sense in qualitative research. As qualitative researchers, we're not basing our application of the code on some underlying knowledge of what might exist in the text. A more fitting model of chance agreement would reflect applying the code, or not, without reading the text at all. For this reason, I prefer to use Scott's kappa; it estimates chance agreement at 50 percent rather than treating it as a function of the code's frequency in the existing sample. In addition, Cohen's kappa makes it more difficult for rare codes to reach acceptable levels of agreement, which is not true of Scott's kappa. Both kappa statistics have a maximum of 1, and higher scores indicate better agreement; a score near 0 means agreement no better than chance, and negative scores are possible when coders agree less often than chance would predict.

In summary, I'm not a big fan of reporting kappa statistics. In my experience, using two coders throughout a project is a better way to ensure that you have consistently coded data. Kappa doesn't ensure consistency in anything other than code application; it tells you nothing about how you read and make sense of the data after coding. We'll return to some of these questions in the next MAXQDA video, where I'll show you how I like to compare coding as part of the codebook development process.
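To make the two-by-two table concrete, here is a minimal Python sketch. It assumes the text has already been pre-segmented (for example, into paragraphs) and that each coder's decisions for a single code are recorded as one True/False value per segment. The function name `two_by_two` and the sample data are hypothetical illustrations, not part of MAXQDA or any other package. Because every segment receives exactly one decision from each coder, the "neither" cell is counted in the same loop as the other three, which is precisely what becomes hard to do without pre-segmentation.

```python
from typing import Dict, Sequence


def two_by_two(coder1: Sequence[bool], coder2: Sequence[bool]) -> Dict[str, int]:
    """Count the four agreement cells for one code over pre-segmented units.

    coder1[i] / coder2[i] is True if that coder applied the code to segment i.
    (Hypothetical helper for illustration.)
    """
    if len(coder1) != len(coder2):
        # One-to-one comparison only works if both coders saw the same segments.
        raise ValueError("both coders must code the same segments")
    table = {"both": 0, "neither": 0, "only_1": 0, "only_2": 0}
    for a, b in zip(coder1, coder2):
        if a and b:
            table["both"] += 1      # both coders applied the code
        elif not a and not b:
            table["neither"] += 1   # the "no-no" agreement
        elif a:
            table["only_1"] += 1    # coder 1 applied it, coder 2 did not
        else:
            table["only_2"] += 1    # coder 2 applied it, coder 1 did not
    return table


if __name__ == "__main__":
    # Hypothetical decisions for one code across ten pre-segmented paragraphs.
    coder1 = [True, True, False, False, False, True, False, False, False, False]
    coder2 = [True, False, False, False, False, True, False, True, False, False]
    print(two_by_two(coder1, coder2))
```

For these ten hypothetical paragraphs, the coders both applied the code twice, agreed that it did not apply six times, and disagreed once in each direction.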

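The sketch below computes three chance-corrected agreement statistics from the four cells of such a table, so the effect of the chance-agreement assumption on a rare code is visible. All three share the form (observed agreement - chance agreement) / (1 - chance agreement) and differ only in how chance agreement is estimated: Cohen's kappa multiplies each coder's own marginal proportions; Scott's pi (the statistic usually attributed to Scott) uses the two coders' pooled marginal proportions; and the free-marginal kappa of Brennan and Prediger (1981) fixes chance agreement at 1/q for q categories, which is 50 percent when the only categories are "applied" and "not applied". The fixed 50 percent chance agreement described above corresponds to this last variant. The class and function names, and the example counts, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgreementTable:
    """Two-by-two agreement counts for one code (hypothetical helper)."""
    both: int     # both coders applied the code
    only_1: int   # coder 1 applied it, coder 2 did not
    only_2: int   # coder 2 applied it, coder 1 did not
    neither: int  # neither coder applied the code

    @property
    def n(self) -> int:
        return self.both + self.only_1 + self.only_2 + self.neither

    def observed(self) -> float:
        """Proportion of segments on which the coders agreed."""
        return (self.both + self.neither) / self.n


def _chance_corrected(p_o: float, p_e: float) -> float:
    # Undefined when chance agreement is 1 (e.g. neither coder ever applied the code).
    if p_e >= 1.0:
        raise ValueError("chance agreement is 1; kappa is undefined")
    return (p_o - p_e) / (1.0 - p_e)


def cohen_kappa(t: AgreementTable) -> float:
    p1 = (t.both + t.only_1) / t.n  # share of segments coder 1 coded
    p2 = (t.both + t.only_2) / t.n  # share of segments coder 2 coded
    p_e = p1 * p2 + (1 - p1) * (1 - p2)  # each coder's own marginals
    return _chance_corrected(t.observed(), p_e)


def scott_pi(t: AgreementTable) -> float:
    p1 = (t.both + t.only_1) / t.n
    p2 = (t.both + t.only_2) / t.n
    p = (p1 + p2) / 2  # pooled proportion of "applied" decisions
    p_e = p * p + (1 - p) * (1 - p)
    return _chance_corrected(t.observed(), p_e)


def free_marginal_kappa(t: AgreementTable, categories: int = 2) -> float:
    # Brennan and Prediger: chance agreement fixed at 1/q (0.5 for applied / not applied).
    return _chance_corrected(t.observed(), 1.0 / categories)


if __name__ == "__main__":
    # Hypothetical counts: the same 98 percent raw agreement for a rare and a common code.
    rare = AgreementTable(both=2, only_1=1, only_2=1, neither=96)
    common = AgreementTable(both=48, only_1=1, only_2=1, neither=50)
    for name, t in [("rare", rare), ("common", common)]:
        print(name, round(cohen_kappa(t), 2), round(scott_pi(t), 2),
              round(free_marginal_kappa(t), 2))
```

With these hypothetical counts, both codes show 98 percent raw agreement, yet Cohen's kappa (and Scott's pi, since the two coders' marginals are equal here) comes out at roughly 0.66 for the rare code versus roughly 0.96 for the common one, while the free-marginal kappa is 0.96 for both. This is the rare-code penalty described in the text.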